Every week someone publishes a new model comparison. Every week the internet argues about benchmarks. Meanwhile, the operators actually making money with AI stopped having that conversation months ago.

The Benchmark Trap

There’s a very specific way to waste 2026.

It goes like this: a new model drops. You read the benchmark comparisons. You switch. Another model drops. You run your tests. You spend an afternoon in a Reddit thread arguing about which one “reasons better.” Nothing ships.

This is not a hypothetical. It’s the dominant behavior pattern in every AI community right now. And the people stuck in it are optimizing a variable that stopped mattering.

Here’s the tell: Anthropic’s Haiku 4.5 runs at $1 per million input tokens. Sonnet 4.6 is $3. Opus 4.7 is $5. DeepSeek V3.2, a model that scores competitively on SWE-bench and handles a 1 million token context window, costs $0.14 per million input tokens. On input pricing alone, that’s roughly 35x cheaper than Anthropic’s flagship. If the model were the product, every serious operator would have switched already and the conversation would be over.
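The arithmetic behind the "35x" figure is easy to check. Here is a minimal Python sketch that uses only the input prices quoted above. The 50-million-token monthly volume is a hypothetical chosen for illustration, and output-token pricing is deliberately left out, so these are not full bills.

```python
# Input-token prices quoted above, in USD per million input tokens.
# Output-token prices are not included; they differ and would change real totals.
PRICE_PER_M_INPUT = {
    "Haiku 4.5": 1.00,
    "Sonnet 4.6": 3.00,
    "Opus 4.7": 5.00,
    "DeepSeek V3.2": 0.14,
}

def input_cost(model: str, tokens: int) -> float:
    """Dollar cost of sending `tokens` input tokens to `model`."""
    return PRICE_PER_M_INPUT[model] * tokens / 1_000_000

def price_ratio(expensive: str, cheap: str) -> float:
    """How many times more one model costs than another, per input token."""
    return PRICE_PER_M_INPUT[expensive] / PRICE_PER_M_INPUT[cheap]

if __name__ == "__main__":
    ratio = price_ratio("Opus 4.7", "DeepSeek V3.2")
    print(f"Opus 4.7 vs DeepSeek V3.2: {ratio:.1f}x")  # 5.00 / 0.14 ≈ 35.7
    # Hypothetical monthly volume of 50 million input tokens.
    for model in PRICE_PER_M_INPUT:
        print(f"{model}: ${input_cost(model, 50_000_000):.2f}")
```

The ratio is real, but notice how small the absolute numbers are at this volume. That is part of why price alone hasn't triggered a mass migration.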

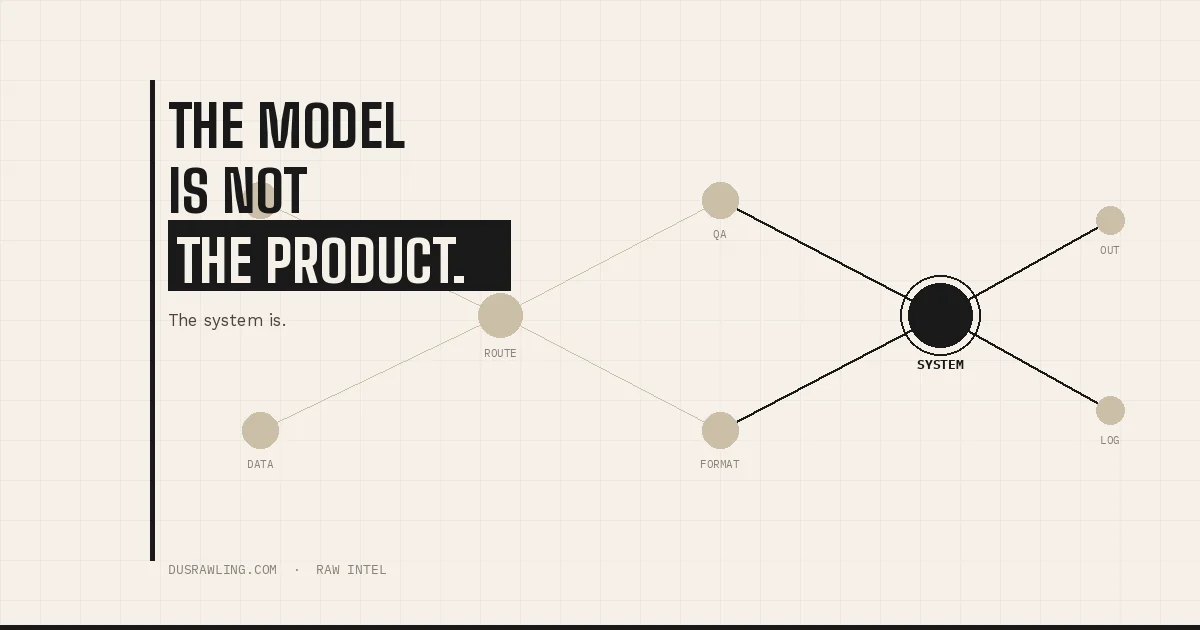

They haven’t. Because the model is not the product.

Once you have a real system, swapping the model is a 10-minute config change. It stops being a decision and becomes a parameter. The people still arguing about models are telling you, without meaning to, that they haven’t built the system yet.
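In code, "the model is a parameter" can look something like this minimal Python sketch. The `PipelineConfig` fields, the `call_model` adapter, and the model name strings are hypothetical placeholders, not any provider's real API or model identifiers. `fake_model` stands in for a real provider call so the sketch runs offline.

```python
from dataclasses import dataclass, replace
from typing import Callable

@dataclass(frozen=True)
class PipelineConfig:
    # The model is just one field; the rest of the system's decisions stay fixed.
    model: str
    output_format: str

def run_step(config: PipelineConfig, prompt: str,
             call_model: Callable[[str, str], str]) -> str:
    """Run one pipeline step. `call_model(model, prompt)` is a hypothetical
    adapter you would write around whichever provider SDK you use."""
    instructions = f"Respond as {config.output_format}.\n\n{prompt}"
    return call_model(config.model, instructions)

def fake_model(model: str, prompt: str) -> str:
    # Offline stand-in: echoes the model name and the first line of the prompt.
    return f"[{model}] {prompt.splitlines()[0]}"

base = PipelineConfig(model="sonnet-4.6", output_format="JSON")
# Swapping the model is a one-field change; everything else is untouched.
cheaper = replace(base, model="deepseek-v3.2")

if __name__ == "__main__":
    print(run_step(base, "Summarize this week's sales.", fake_model))
    print(run_step(cheaper, "Summarize this week's sales.", fake_model))
```

The design choice is the point. Because the model name lives in config rather than being scattered through prompts and scripts, changing it is a decision about one value, not a rebuild.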

What the Top 20% Are Actually Building

PwC published a study this month surveying 1,217 senior executives across 25 sectors. The finding that matters: 74% of AI’s economic gains are going to just 20% of organizations.

The defining characteristic of that 20% is not model selection. It’s this: they are twice as likely to redesign workflows around AI rather than layer AI on top of what they were already doing. They’re 2.8 times more likely to have removed human intervention from decisions where it adds no value, while going further on oversight for decisions that actually require it.

That is not a technology finding. That’s an architecture finding.
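One way to encode that finding is a routing rule that decides, decision by decision, whether a human needs to see it. This is a minimal Python sketch under assumed criteria. Reversibility and a hypothetical $500 threshold stand in for whatever your own policy says; neither comes from the PwC study.

```python
from dataclasses import dataclass
from enum import Enum

class Route(Enum):
    AUTO = "auto"            # the system acts without a human
    HUMAN_REVIEW = "review"  # a person must approve first

@dataclass
class Decision:
    description: str
    reversible: bool   # can the action be undone cheaply?
    amount_usd: float  # money at stake

# Hypothetical policy threshold; each business would set its own.
REVIEW_THRESHOLD_USD = 500.0

def route(decision: Decision) -> Route:
    """Send a decision to a human only where oversight adds value:
    here, when it is irreversible or the stakes exceed the threshold."""
    if not decision.reversible or decision.amount_usd > REVIEW_THRESHOLD_USD:
        return Route.HUMAN_REVIEW
    return Route.AUTO

if __name__ == "__main__":
    print(route(Decision("Tag an inbound email", True, 0.0)))        # Route.AUTO
    print(route(Decision("Issue a $1,200 refund", False, 1200.0)))  # Route.HUMAN_REVIEW
```

The same function does both halves of the finding. It removes the human from low-stakes, reversible calls and guarantees review on the ones that matter.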

The same split is visible at the individual operator level — the creator, the solo builder, the digital product seller. One group is running prompts in a chat window and calling it a workflow. The other has made a specific set of decisions about what their system does at each step: what triggers the next action, what format the output takes, where it goes, what the quality check looks like.

Those decisions, made once and encoded into a repeatable chain, are what turn inconsistent output into reliable infrastructure. The model is one node in that chain. It is not the chain.
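Here is a minimal Python sketch of such a chain. It assumes each step is a plain function paired with a quality check. `Step`, `run_chain`, `draft`, `is_valid_draft`, and `publish` are hypothetical names for illustration. The `draft` node is where a model call would go, and it is just one entry in the list.

```python
import json
from dataclasses import dataclass
from typing import Callable

@dataclass
class Step:
    name: str
    run: Callable[[str], str]     # transforms the previous step's output
    check: Callable[[str], bool]  # quality gate; False halts the chain

class ChainError(Exception):
    pass

def run_chain(steps: list[Step], trigger_input: str) -> str:
    """Feed each step's output into the next, stopping at the first failed check."""
    data = trigger_input
    for step in steps:
        data = step.run(data)
        if not step.check(data):
            raise ChainError(f"quality check failed at step '{step.name}'")
    return data

def draft(topic: str) -> str:
    # Hypothetical model node; a real system would call an LLM here.
    return json.dumps({"title": topic.title(), "body": f"Notes on {topic}."})

def is_valid_draft(output: str) -> bool:
    # Output-format decision: the draft must be a JSON object with a non-empty body.
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return False
    return isinstance(parsed, dict) and bool(parsed.get("body"))

def publish(output: str) -> str:
    # Destination decision: here we only tag the payload as queued.
    return f"QUEUED:{output}"

steps = [
    Step("draft", draft, is_valid_draft),
    Step("publish", publish, lambda out: out.startswith("QUEUED:")),
]

if __name__ == "__main__":
    print(run_chain(steps, "weekly research digest"))
```

Swapping the model only touches `draft`. The format check, the destination, and the halt-on-failure behavior stay exactly the same.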

IBM’s Chief Architect of AI put it plainly earlier this year: “If you go to ChatGPT, you are not talking to an AI model. You are talking to a software system that includes tools for searching the web, doing all sorts of different individual scripted programmatic tasks, and most likely an agentic loop.”

That’s already true at the product level. The people getting results right now are making it true in their own operations, too.

What Most People Are Still Missing

Here’s the uncomfortable implication.

Most of the AI content people consume in 2026 is produced by people who haven’t built a real system either. “Which model is best” gets clicks because it maps to a familiar consumer behavior: compare options, pick the winner, feel informed.

Orchestration doesn’t have that property. You can’t comparison-shop a workflow architecture the same way you comparison-shop a model release. There’s no leaderboard. There’s no benchmark. There’s just: does your chain produce consistent, usable output without you in the loop every single time?

That illegibility is exactly why the gap exists, and why it is still open. Most people haven’t crossed it. The ones who have aren’t writing tutorials; they’re exploiting the asymmetry while it lasts.

What crossing it actually requires is not exotic tooling. n8n has a free self-hosted tier, and the paid cloud version starts at $20/month. Make’s free plan handles 1,000 operations a month before you see a bill. At modest volumes, you can connect Sonnet 4.6 to your own data and your own output formats for well under $50/month in total infrastructure costs. The tooling cost is not the bottleneck.

The bottleneck is a mental model shift: from “I use AI” to “I run a system and the model is one component of it.” That shift changes what you build, what you charge for, and what you can reliably deliver.

A content operator who has made that decision once — for their research loop, their drafting loop, their distribution sequence — doesn’t have a workflow. They have infrastructure. Infrastructure that runs while they’re building the next thing.

A digital product seller who has wired their email funnel, customer intake, and delivery sequence into a defined chain isn’t hustling harder. They’ve removed themselves as the rate-limiting step in their own business.

That’s the actual output of getting orchestration right. Not a smarter prompt. A business that doesn’t require you to run it manually every single time.

The benchmark debate will keep running. New models will keep dropping. The content about which one is best will keep getting clicks.

Meanwhile, the people who figured out that the system was always the product are already two laps ahead. Not because they had better tools. Because they asked a different question.